JONATHAN EATO

Recording and Listening to Jazz and Improvised Music in South Africa

I’m interested in musical priorities. It’s been a running theme for me, mainly because I think if you listen to a musician’s musical priorities you have a better chance of understanding the music on its own terms, rather than inadvertently imposing an outside vision on it. Nothing wrong with outside visions in recording, or deliberate subversions of established practice, come to that – think of Lee ‘Scratch’ Perry’s innovations at Black Ark, Phil Spector’s ‘wall of sound’ etc. – but you have to know that this is what they are.

Anyway, if recording is about one thing, it’s about listening. Listening to how a musical source sounds in a room. Listening to the equipment you have access to – microphones, mic preamps, outboard, console, plugins, converters – in many different situations and on many different sources in order to know what effect certain technical choices will have. It’s about listening to how a musical source sounds through the signal chain you’ve set up. Listening to how all the microphones you’ve set up sound together. Listening to the full journey of a recorded sound, right through onto the tape (or in the digital age, through the A to D converters). Listening to how all that compares to what is being played live in the room. And, having listened to what the musicians are wanting to achieve, listening out for any potential gaps between result and intention.

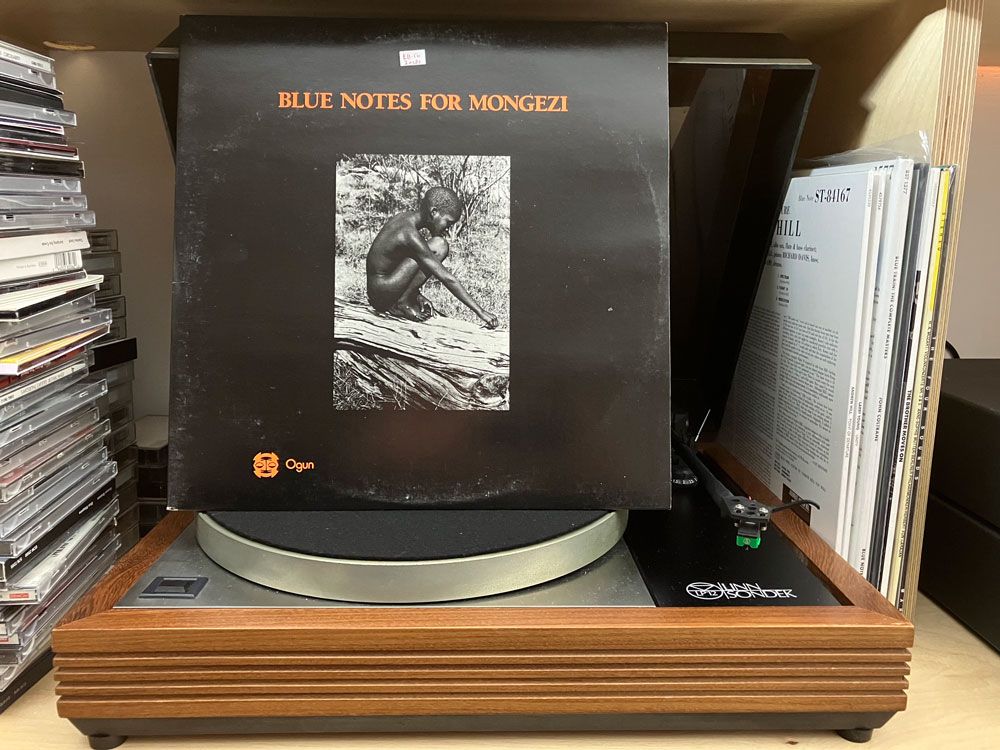

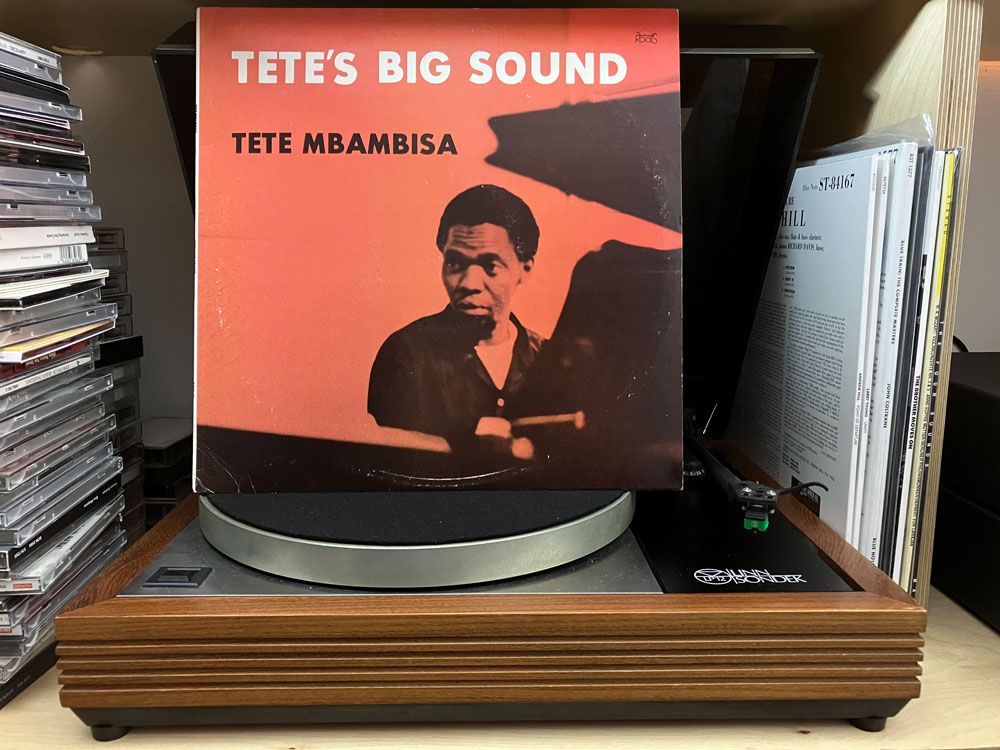

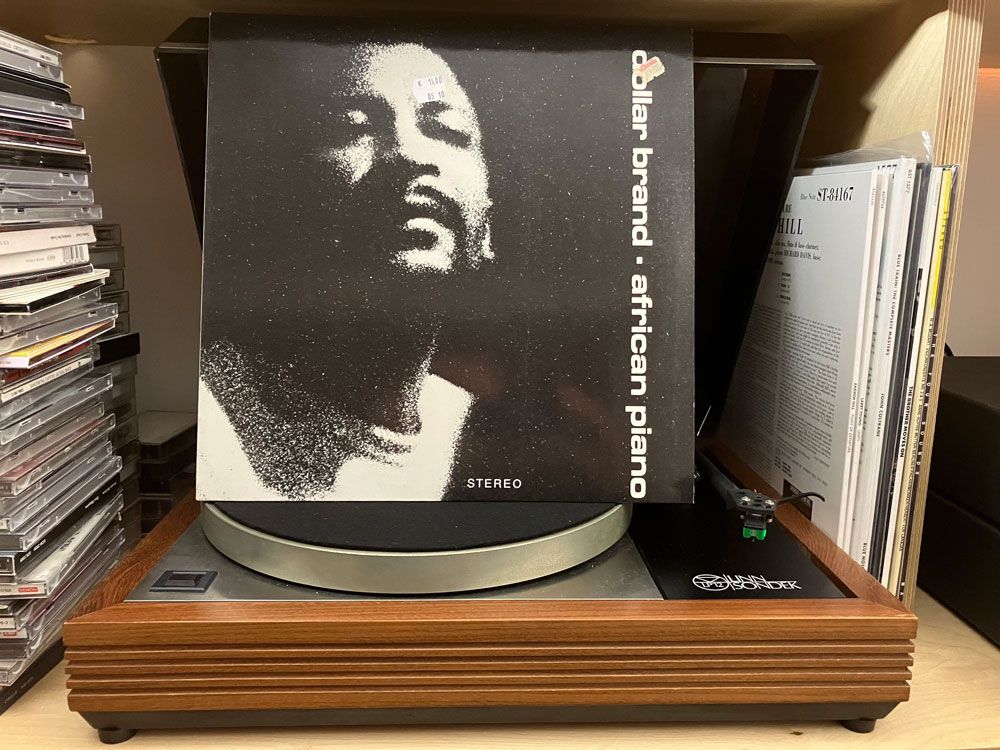

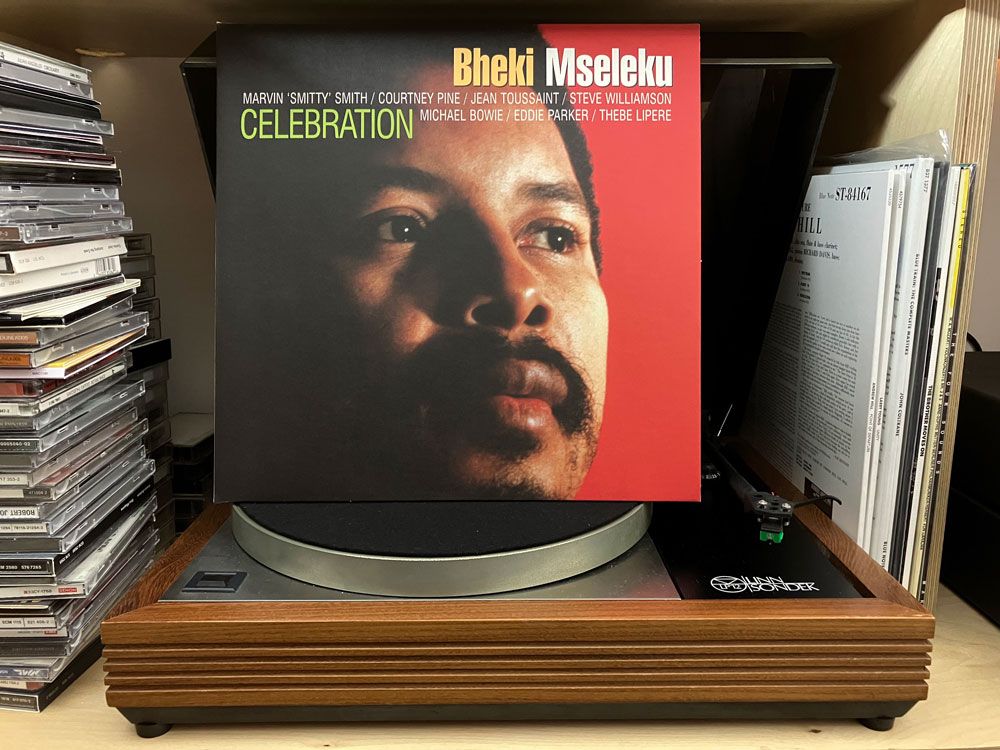

I also listen to a lot of recordings. Mainly so-called jazz recordings, and a lot of South African jazz recordings, but not exclusively. This listening is where I assemble my context. Additionally, I listen to a small selection of recordings that are important to me over and over again. On vinyl, on CD, as high-resolution files, as lossy MP3s, as digital streams, and on the radio. I listen on various types of headphones, on a stereo in my front room, in the car, walking down the street, and sitting on the bus. I listen on laptop and phone speakers, and in an acoustically treated studio (where I use a monitor controller to quickly switch between a calibrated main system of two main monitors and sub, a pair of the ubiquitous NS10s, and a mono grotbox). I see this repetitious listening as the closest thing I’ll ever get to listening as someone else; I can’t listen as someone else, but I can listen as me in different environments.

I also try to listen in my imagination: how would I like something to sound without the real-world constraints of different instruments competing for a finite frequency band?

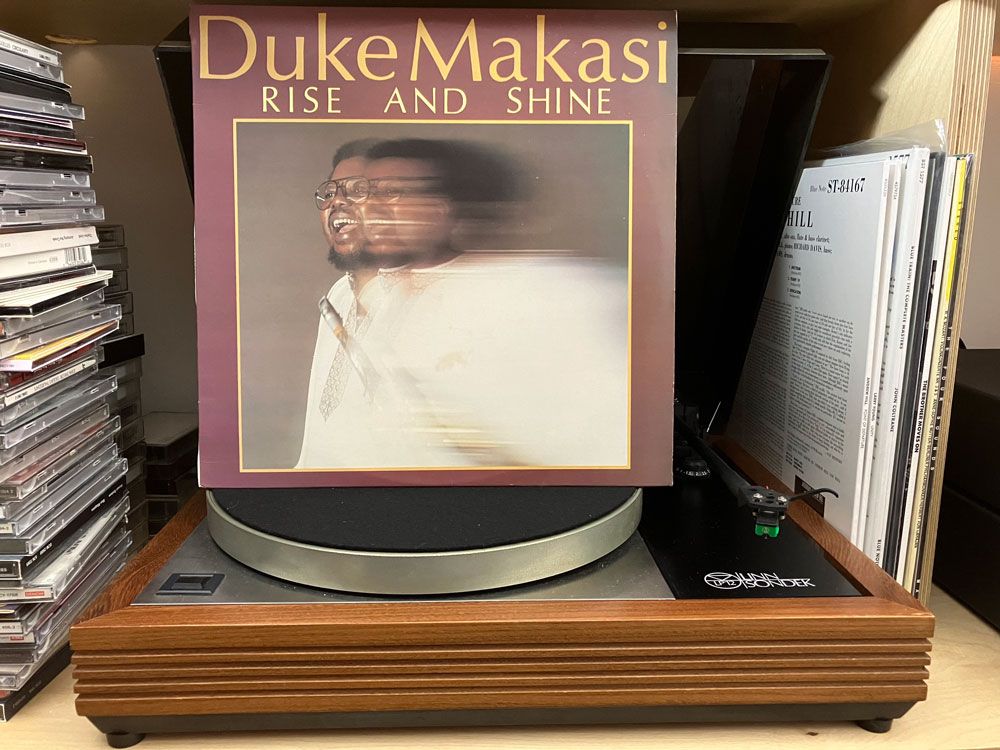

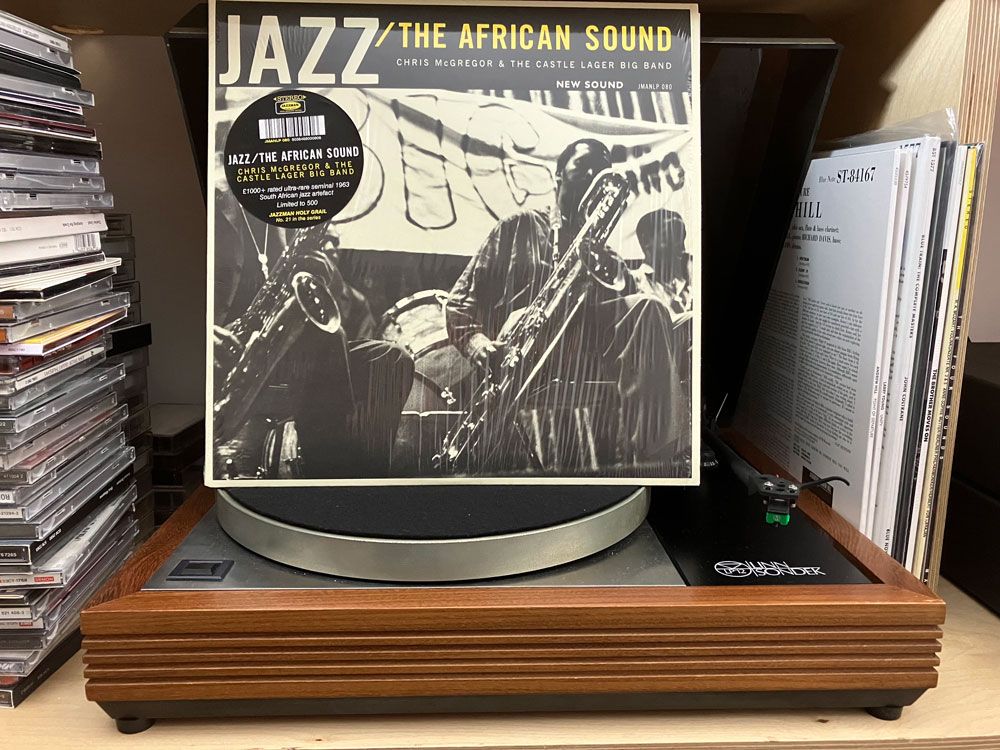

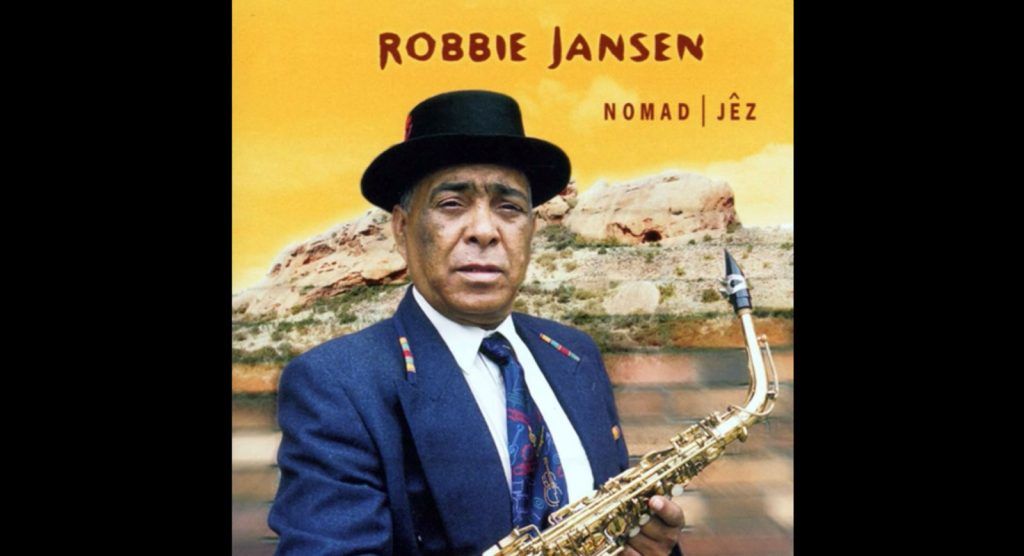

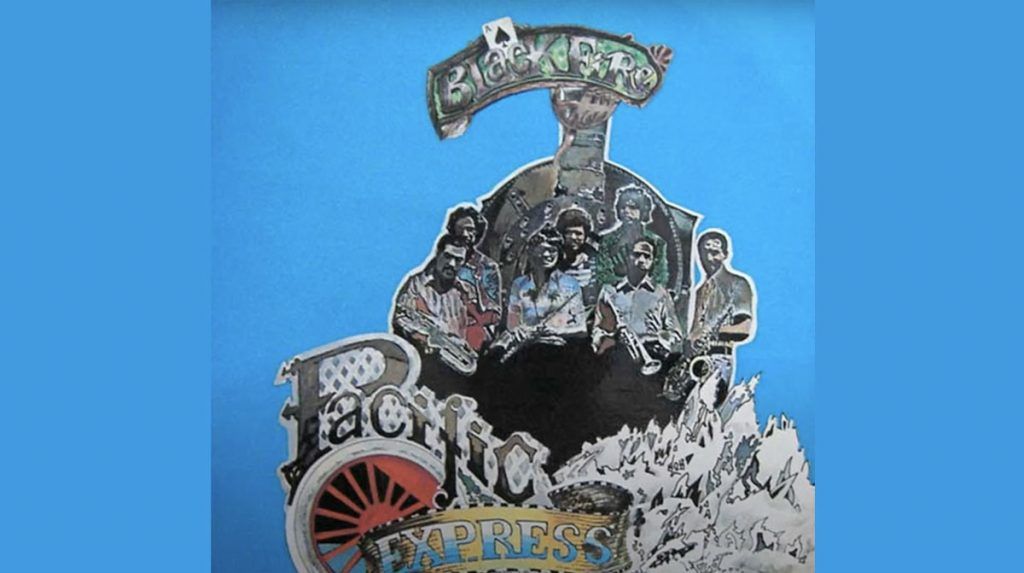

The context I’m trying to assemble is also in relation to the idea of jazz (and improvised music). That this covers a hugely diverse range of musical practices has been pointed out many times. And so-called jazz musicians are also engaged in a range of musical projects – Robbie Jansen recorded with The Rockets, and Pacific Express, both of which are sound-worlds apart from his Vastrap Island or Nomad Jez albums. And, especially over the course of a long career, musicians’ styles can change and develop. So, even when we have our context, the questions for sound recordists go something like: should Robbie Jansen’s saxophone be recorded in the same manner for Vastrap Island as it was for Pacific Express? Should we be looking to the drum sound on The Blue Notes’ early recordings for the SABC as a reference when considering a recording set up for Louis Moholo-Moholo’s Five Blokes over half a century later (even accounting for changes of recording technology)?

Regardless, there remain some important commonalities over time regarding this music. It is, to a large extent, a music that is still recorded live. Yes, there are overdubs on contemporary recordings, and of course there are some wonderful projects that utilise more of a ‘pop production’ process where individual musicians track individually. But my guess is that these are either by way of additions to a ‘live in the room’ ensemble recording, or contemporary exceptions to the rule, rather than a choice that aims to impose a specific sound on a recording. Anyway, the immediate practical implication of the live recording model is having a recording room big enough to fit a group of musicians.

And at this point the room becomes part of the sonic equation – for better or worse.

An interesting case study of this in terms of jazz recording is the move of Rudy Van Gelder’s studio from his parents’ living room in their Hackensack house to the much larger purpose-built room at Englewood Cliffs. This not only changed the sound of the recordings Van Gelder made for Blue Note Records, amongst others, it obliged Van Gelder to adjust his engineering practice and also had an effect on how musicians would play together in the room (musical decisions improvised in a reverberant acoustic are quite a different proposition from those made in a dry acoustic).

But if musicians’ sounds and/or techniques can change, so can those of a recording engineer. I don’t believe the differences in recorded sound between McCoy Tyner’s Tender Moments and Abdullah Ibrahim’s Water From An Ancient Well – both medium-sized acoustic jazz bands, both recorded by Van Gelder in the same room at Englewood Cliffs – are only down to technological developments or the differences between Tyner’s and Ibrahim’s musical visions.

Whilst pop music recording practice has evolved away from recording in live spaces to a much greater degree than jazz, classical music recording practice is perhaps the most invested in recording the sound of the room. This leaves jazz – as a music recorded ‘live in the room’ – somewhere in-between, even before mix decisions are made regarding artificial reverb (think of ECM Records producer Manfred Eicher’s love of Lexicon reverb units, and then try and imagine Keith Jarrett’s European quartet recordings without reverb). When Louis Moholo-Moholo’s large ensemble project was set to perform in St. Peter’s Church, Mowbray (CT) in 2016 I was wondering what sort of recorded sound we might get. A lot of the music heard in churches is originally conceived with that kind of acoustic space in mind, and, however itinerant jazz musicians have had to be, I don’t think many have specifically tailored their musical language for such spaces. (As it turned out St. Peter’s is quite heavily carpeted which helped, in my opinion, to align the acoustic much more to the music.)

Another commonality is that there’s also often a rhythm section in jazz, and across many styles of the music the rhythm section tends to function according to the development of the rhythm section in jazz. This is perhaps an example of musicians encoding their priorities into the music, and in my view there’s an obligation on the sound recordist to be able to hear and decode these priorities. In this example I would argue that it’s not possible to represent jazz on record without an appreciation of this rhythm section function; how the bass and piano / guitar work together to complete the harmony, especially where rootless or ambiguous voicings are used, how the rhythm section works as a whole to define the rhythmic matrix, and how both these combine to become ‘the rhythm section sound’. There is no rhythm section equivalent in classical music, and, in other musics with a rhythm section, they operate on different priorities to jazz. For example, kick and snare rule in rock – they contribute a great deal to the sound of a song – and much of the readily available information on how to mic a kit, how to situate it in the mix, derives from the sound and function of the drum kit required by rock. But jazz drummers rarely use the kick and snare in the same way as rock drummers, so there’s an adjustment to be made in recording and mixing.

That’s not to dismiss the kick in recording jazz; for one thing, it was bebop drummer Tiny Kahn who first persuaded the legendary sound recordist Al Schmitt to put a mic on the kick. And there are examples of grooves in jazz recordings where, in my opinion, the kick sound might need to be differentiated between tracks on an album.

An example of this is the groove developed by Gilbert Matthews for Chris McGregor’s Brotherhood of Breath composition Country Cooking on the album of the same name; originally this was the first track on side 1 and – in my opinion – demands quite a different recorded sound from the kick on tracks 2 and 3 (Bakwetha and Sweet As Honey).

But what about musical priorities as spoken about by musicians? Consider a couple of quotations from drummer, composer and pioneer in improvised music, Louis Moholo-Moholo. They were both made at the SASRIM Composers’ Panel, held at the University of Stellenbosch in 2010. Bra Louis said:

Any piano will do for me actually… I use that as a percussion.

With this music I am in the front and the saxophones are in the back. Like, you know, automatically I have a band, and it could be a trio band. I will always be in the back, it doesn’t matter whether I am a band leader or not. It’s like piano, bass and drums. It’s never like drums, piano and… you know. Let the piano be in the back for a while.

I don’t wish to imply that the recorded sound of the piano doesn’t matter to Bra Louis, and I certainly recognise that he’s worked with the Who’s Who of pianists. But there are things in these two statements that could usefully inform recording decisions. Considering that both drum kit and piano are very often recorded in stereo, how should they both sit in the stereo field in his music? Is it appropriate to have the ultra-wide piano perspective of contemporary recordings with the lowest notes at the left-most edge and the highest notes way over to the right? Don’t get me wrong, it’s an approach to recording the piano that I’m very attracted to and which I have sought out when recording pianists I especially admire, such as Nduduzo Makhathini, and Mário Laginha.

But listening to Bra Louis’ words, I think I would at least search for other solutions for the piano in an ensemble he was leading.

And writing that makes me think.

Mix engineers regularly consider depth, i.e. the front-back perspective, as well as the left-right. Depth is often achieved through use of delays and reverb. But with physically large instruments such as the piano or the drum kit, the nearer we get to them in real life the more extreme our spatial experience. Were I to lean over Bra Louis’ kick drum whilst he was playing I would experience the cymbals on his kit as if hearing them almost exclusively through one ear or the other. Conversely, if I were a great distance from his kit I might struggle to hear any left-right differentiation for the various components of the kit. And yet whilst an engineer might routinely bring an instrument to the front or back of a band at various moments in a recording, I have never heard a matching change in width when a drum kit is brought forwards or further back. This might seem like an overly radical prospect (I’ve never tried it, but am certainly going to…); however, if we are to really listen to musicians who have proved to be radical musical innovators, perhaps there is an onus on sound recordists and mix engineers to acknowledge some of that radical musicality in their own practice?
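As a rough illustration of that width idea, and not a description of any established mix practice, the geometry can be sketched in a few lines of Python. Everything here is an assumption made for the sake of the example: the function names are hypothetical, the kit is treated as a flat source of a given width in metres, and the stereo width heard at a chosen reference distance is taken as the widest the image should ever get. Width is then narrowed with a simple mid/side adjustment as the imagined listening distance grows.

```python
import math


def subtended_angle_deg(source_width_m: float, distance_m: float) -> float:
    """Angle in degrees that a source of this width spans at the listener."""
    return math.degrees(2.0 * math.atan(source_width_m / (2.0 * distance_m)))


def width_scale(source_width_m: float, distance_m: float,
                reference_distance_m: float) -> float:
    """Width factor relative to the reference distance (assumed cap of 1.0)."""
    reference = subtended_angle_deg(source_width_m, reference_distance_m)
    current = subtended_angle_deg(source_width_m, distance_m)
    return min(1.0, current / reference)


def apply_width(left: float, right: float, scale: float) -> tuple[float, float]:
    """Mid/side width adjust for one stereo sample pair.

    scale = 1.0 leaves the pair unchanged; scale = 0.0 collapses it to mono.
    """
    mid = 0.5 * (left + right)
    side = 0.5 * (left - right)
    return mid + scale * side, mid - scale * side


if __name__ == "__main__":
    kit_width_m = 2.0  # assumed physical width of the kit
    for distance_m in (1.0, 3.0, 10.0):
        scale = width_scale(kit_width_m, distance_m, reference_distance_m=1.0)
        print(distance_m, round(scale, 3), apply_width(1.0, 0.0, scale))
```

Under these assumptions, a kit pushed further back in the mix would also be narrowed, so that depth and width move together rather than depth alone. Whether that sounds convincing is a matter for listening, not arithmetic.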

Credits: Carousel 2: Photos by Jonathan Eato; Carousel 4: Camera by Minyung Im